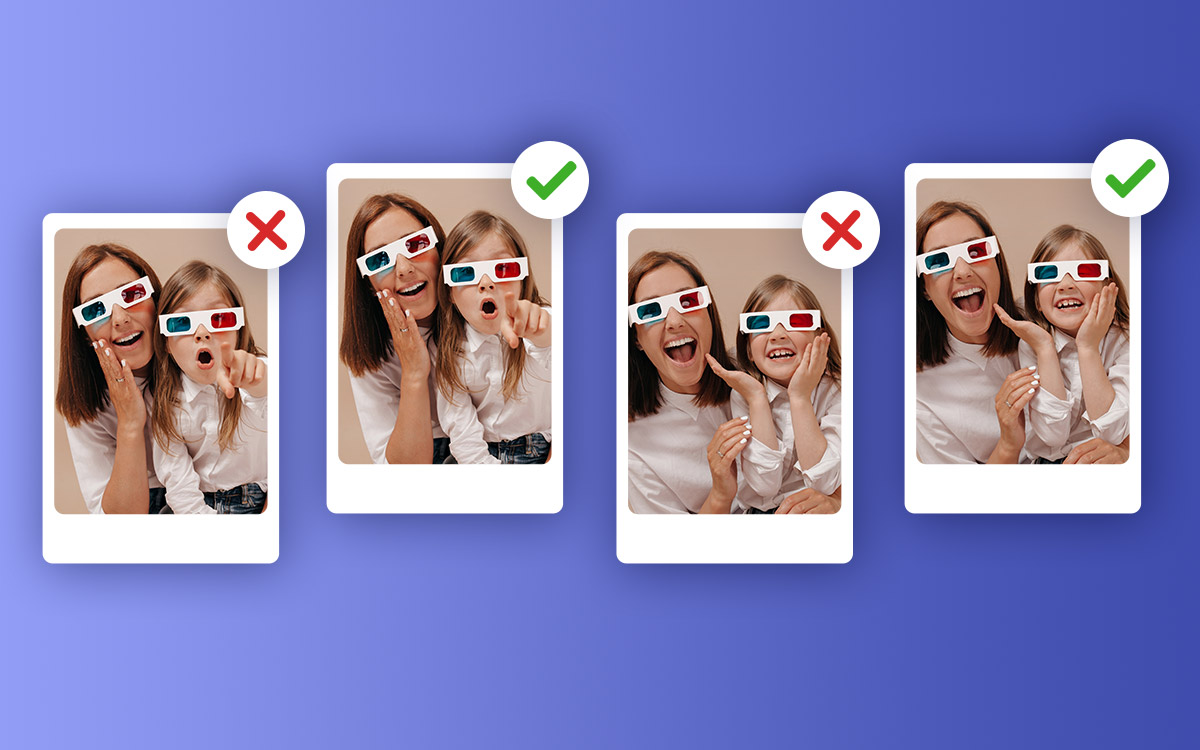

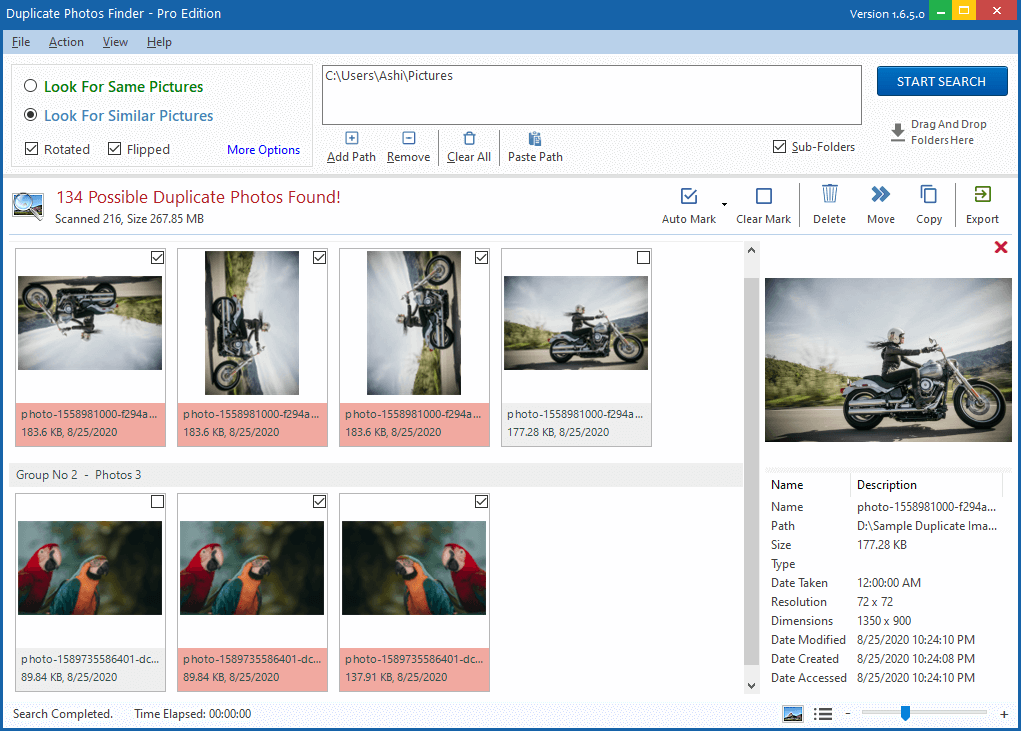

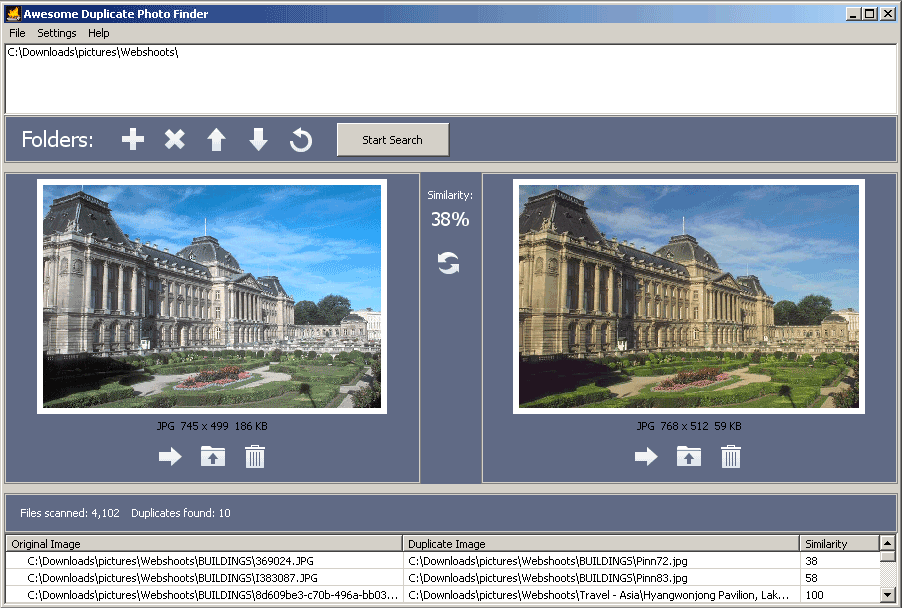

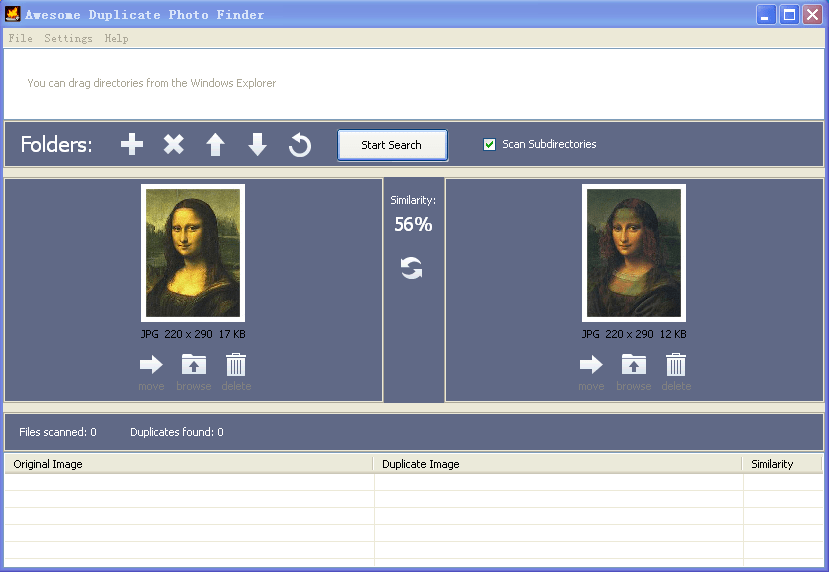

This is not really a space issue, as I have lots, but I have nearly 1000 ebooks that I sometimes file in multiple places. (No, I don't use the dreaded Kindle, thanks.) I may download a book to a "holiday reading" folder, then download it again to the "Publishers" folder; at some point I may have reviewed it and moved it to the "author" folder, and then forgotten that I also saved it in the "lost interest in this" folder. So there could be anywhere from one to four versions of a book floating around.

If I search specifically by name, it shows me all of the versions and I can delete the ones that are in the "wrong" place, but I don't have the time or inclination (despite my love of procrastination by rearranging files) to search 1000 titles. So is there a simple Dropbox search function for duplicates, or do I have to download some kind of app that will do the job? Can you download file titles and locations to a spreadsheet? At least that would let me filter/sort and then go back and find the ones I want to delete.

If an app is required, I don't want one that automatically deletes duplicates; I want to pick which ones to keep. If you have any recommendations for one that is easy to use and pretty much straightforward (nothing fancy required, just find the duplicates, or those that may have a similar name, since sometimes they get saved with slightly different titles), I'd appreciate the help.

Downloads: Windows (x64), Windows (x32), Ubuntu (x32, x64), macOS (10.12+), Source (zip), Source (tar.gz)

dupeGuru is a cross-platform (Linux, OS X, Windows) GUI tool to find duplicate files in a system. It’s written mostly in Python 3 and has the peculiarity of using multiple GUI toolkits, all using the same core Python code. On OS X, the UI layer is written in Objective-C and uses Cocoa. On Linux & Windows, it’s written in Python and uses Qt5.

dupeGuru is a tool to find duplicate files on your computer. It can scan either filenames or contents. The filename scan features a fuzzy matching algorithm that can find duplicate filenames even when they are not exactly the same. dupeGuru runs on Mac OS X, Linux and Windows.

dupeGuru is efficient. Find your duplicate files in minutes, thanks to its quick fuzzy matching algorithm. dupeGuru not only finds filenames that are the same, but it also finds similar filenames.

dupeGuru is good with music. It has a special Music mode that can scan tags and shows music-specific information in the duplicate results window.

dupeGuru is good with pictures. It has a special Picture mode that can scan pictures fuzzily, allowing you to find pictures that are similar, but not exactly the same.

dupeGuru is customizable. You can tweak its matching engine to find exactly the kind of duplicates you want to find. The Preference page of the help file lists all the scanning engine settings you can change.

dupeGuru is safe. Its engine has been especially designed with safety in mind. Its reference directory system as well as its grouping system prevent you from deleting files you didn’t mean to delete.

Do whatever you want with your duplicates. Not only can you delete the duplicate files dupeGuru finds, but you can also move or copy them elsewhere. There are also multiple ways to filter and sort your results to easily weed out false duplicates (for low threshold scans).
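For an ebook collection like the one described here, a short script can produce the spreadsheet of titles and locations and flag likely duplicates without deleting anything. The following is a minimal Python sketch, not dupeGuru's implementation: the function names (`inventory`, `write_csv`, `exact_duplicates`, `similar_names`), the CSV columns and the 0.85 similarity threshold are all assumptions chosen for illustration. It groups files with identical contents by SHA-256 hash and uses the standard library's `difflib.SequenceMatcher` as a simple stand-in for fuzzy filename matching.

```python
import csv
import hashlib
import os
from collections import defaultdict
from difflib import SequenceMatcher


def file_hash(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def inventory(root):
    """Walk `root` and return one record per file: name, folder, size, hash."""
    records = []
    for folder, _dirs, names in os.walk(root):
        for name in names:
            path = os.path.join(folder, name)
            records.append({
                "name": name,
                "folder": folder,
                "size": os.path.getsize(path),
                "sha256": file_hash(path),
            })
    return records


def write_csv(records, csv_path):
    """Save the inventory so it can be sorted and filtered in a spreadsheet."""
    fields = ["name", "folder", "size", "sha256"]
    with open(csv_path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=fields)
        writer.writeheader()
        writer.writerows(records)


def exact_duplicates(records):
    """Group full paths by content hash; keep groups with more than one file."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec["sha256"]].append(os.path.join(rec["folder"], rec["name"]))
    return [sorted(paths) for paths in groups.values() if len(paths) > 1]


def similar_names(records, threshold=0.85):
    """Return pairs of distinct file names whose similarity meets the threshold.

    The threshold is an illustrative assumption; lower values catch more
    renamed copies but also produce more false matches.
    """
    names = sorted({rec["name"] for rec in records})
    pairs = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            ratio = SequenceMatcher(None, first.lower(), second.lower()).ratio()
            if ratio >= threshold:
                pairs.append((first, second))
    return pairs
```

Calling `write_csv(inventory(root), "ebooks.csv")` on a locally synced folder gives a file that opens in any spreadsheet program, and `exact_duplicates` and `similar_names` only report candidates, so the choice of which copy to keep stays manual. The pairwise name comparison is quadratic in the number of distinct names, which should be acceptable for roughly a thousand titles but would need a smarter approach for much larger collections.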